High-performing teams vs. not-invented-here syndrome

Sep 3 2023 · Software Engineering

A few months ago, particularly frustrated by yet-another-bug and yet-another-limitation of a library used in one of my team’s systems, I remembered a story about the Excel dev team and dug up Joel Spolsky’s essay “In Defense of Not-Invented-Here Syndrome,” which I had read years ago. I didn’t think much of the essay when I first read it, but now, having been in the industry for a while, I have a greater appreciation for it.

NIH syndrome is generally looked at in a negative light and for good reason; companies and teams that are too insular and reject ideas or technologies from the outside can find themselves behind the curve. However, there’s a spectrum here and, at the opposite end, heedless adoption of things from the outside can put companies and teams in an equally precarious position.

So, back to the story of the Excel development team:

“The Excel development team will never accept it,” he said. “You know their motto? ‘Find the dependencies — and eliminate them.’ They’ll never go for something with so many dependencies.”

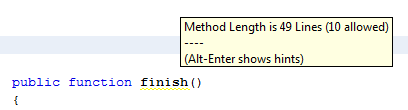

Dealing with dependencies is a reality of software engineering, perhaps even more so now than in the past, and for good reason: there’s a world of functionality that can simply be plugged into a project, saving significant amounts of time and energy. However, there are a number of downsides as well:

- Your team doesn’t control the evolution or lifecycle of that dependency

- Your team doesn’t control the quality of that dependency

- Your team doesn’t have knowledge of how that dependency does what it does

When something breaks or you hit a limitation, your team is suddenly spending a ton of time debugging an issue that originates in a codebase they’re not familiar with and, once the issue is understood, coding some ugly hack to get the dependency to behave in a more reasonable way. So, when a team has the resources, it’s not unreasonable to target the elimination of dependencies for:

- A healthier codebase

- A codebase that is more easily understood and can be reasoned about

These two points invariably lead to a higher-performing team. In the case of the Excel dev team:

The Excel team’s ruggedly independent mentality also meant that they always shipped on time, their code was of uniformly high quality, and they had a compiler which, back in the 1980s, generated pcode and could therefore run unmodified on Macintosh’s 68000 chip as well as Intel PCs.

Finally, Joel’s recommendation on what shouldn’t be a dependency and should instead be done in-house:

Pick your core business competencies and goals, and do those in house.

This makes sense and resonates with me. Though there is a subtle requirement here that I’ve seen overlooked: engineering departments and teams need to distill business competencies and goals (hopefully, these exist and are sensible) into technical competencies and goals. Without that distillation, engineering is rudderless; teams pull in dependencies for things that should be built internally, while others sink time into building things from scratch that will never get the business resources to be properly developed or maintained.